
Generate high-quality test scenarios for your AI agents in minutes

Most teams ship AI agents with a handful of hand-written test cases and hope nothing breaks in production. Galtea automatically generates hundreds of use-case-specific test cases from your product specs and evaluates your AI agent against structured metrics, so manual testing becomes the exception, not the workflow.

Start for free - No credit card required

We test what your users experience. Not which model powers it.

Modern AI products are pipelines: intent detection, retrieval, reasoning, and output formatting, with each node potentially running a different model. Galtea evaluates the product end-to-end, so you know whether the whole system works, not just each part in isolation.


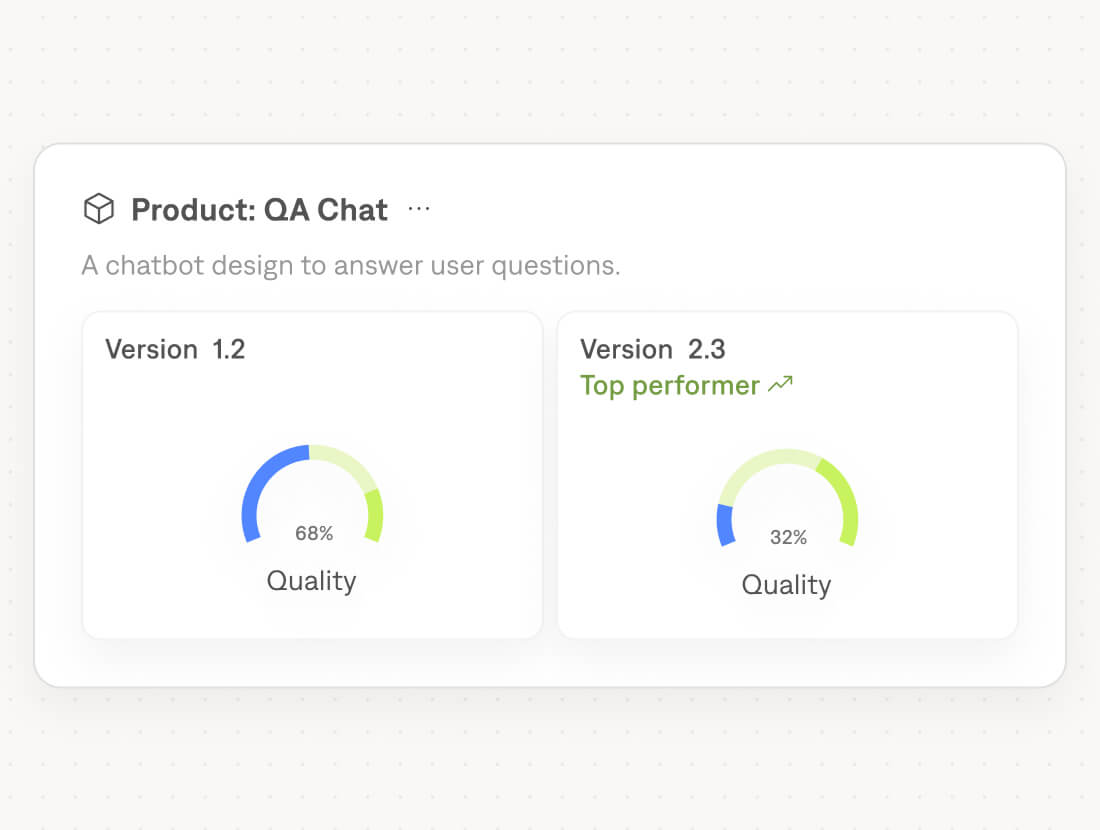

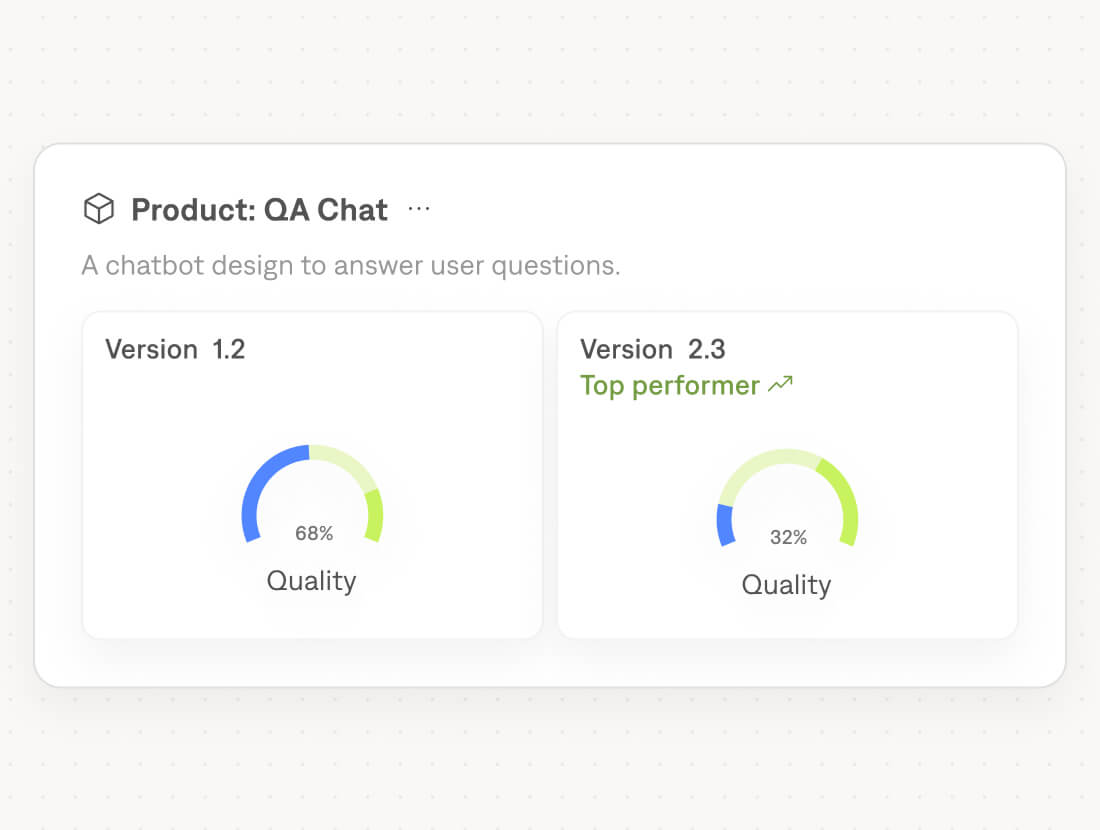

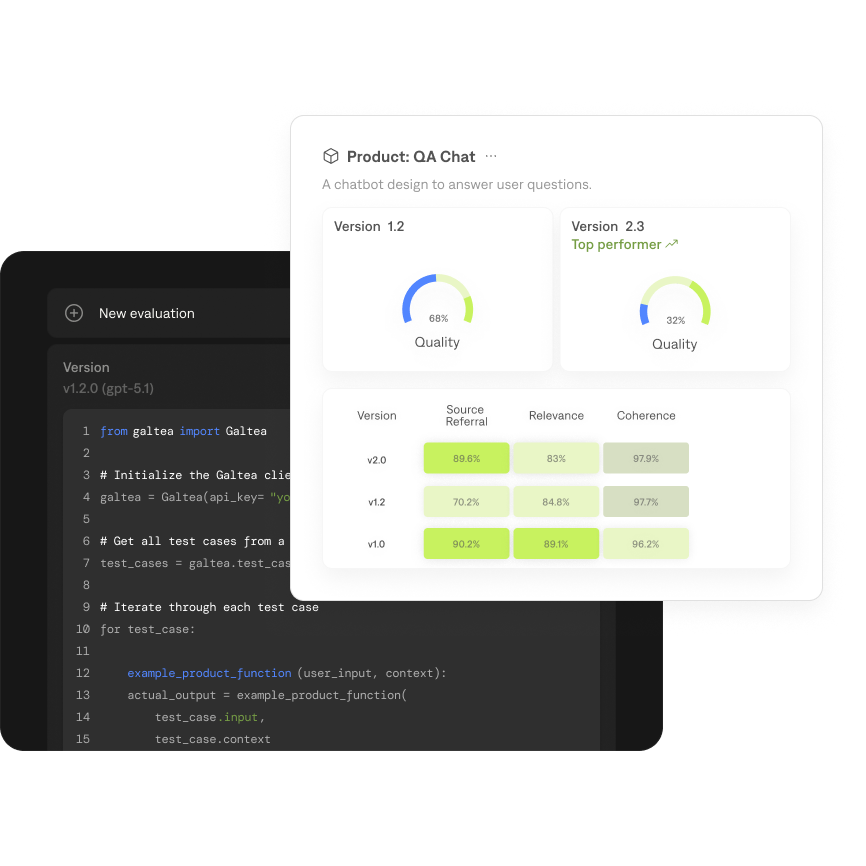

Product-level evaluation

The unit of evaluation is what users experience, not individual model calls, regardless of how many models, agents, or nodes are running underneath.

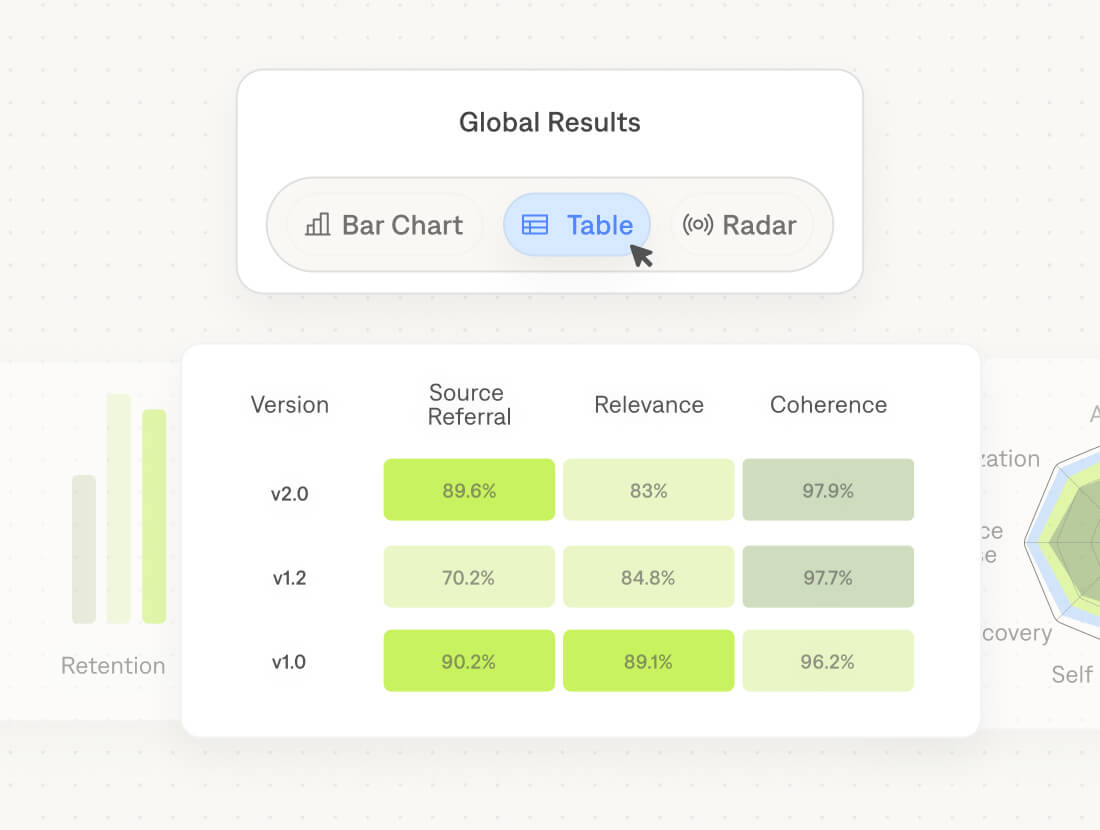

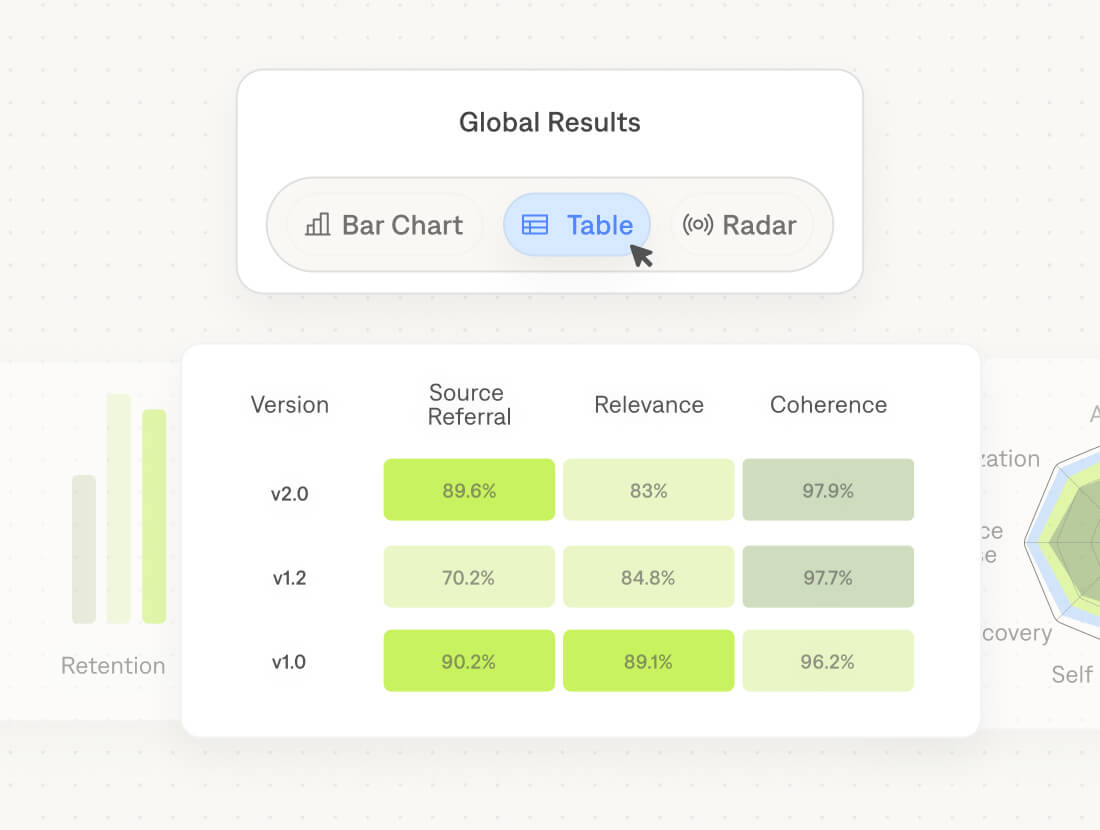

Cross-model comparison

Run the same test suite across multiple providers and compare scores side by side before committing to a model change.
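As a rough illustration of a side-by-side comparison, the sketch below averages per-test-case scores for each provider and ranks them. It does not use the Galtea SDK; the function name, provider labels, and scores are all hypothetical placeholders.

```python
from statistics import mean


def compare_providers(scores_by_provider):
    """Return (provider, mean score) pairs sorted from best to worst."""
    summary = {name: mean(scores) for name, scores in scores_by_provider.items()}
    return sorted(summary.items(), key=lambda item: item[1], reverse=True)


# Hypothetical scores from running the same four test cases against two providers.
results = {
    "provider_a": [0.9, 0.8, 1.0, 0.7],
    "provider_b": [0.6, 0.9, 0.8, 0.5],
}

ranking = compare_providers(results)
for name, score in ranking:
    print(f"{name}: {score:.2f}")
```

Because every provider is scored on the same test cases, the averages are directly comparable.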

Framework agnostic

LangChain, LlamaIndex, Vercel AI SDK, raw API calls. If your app calls an LLM, Galtea can evaluate it.

From 0 to hundreds of test cases. In minutes.

Writing test cases by hand doesn't scale past a handful. The test suite stays at 15 rows, edge cases never make the list, and everyone ships blind. Galtea Simulations generates realistic user queries, adversarial inputs, edge cases, and synthetic user personas automatically from your system prompt. No dataset needed. No manual writing.

Realistic user queries, edge cases, and adversarial inputs

Synthetic user personas generated from your product specs

Hundreds of test cases. Zero written by hand


Every change, measured.

Every regression, caught.

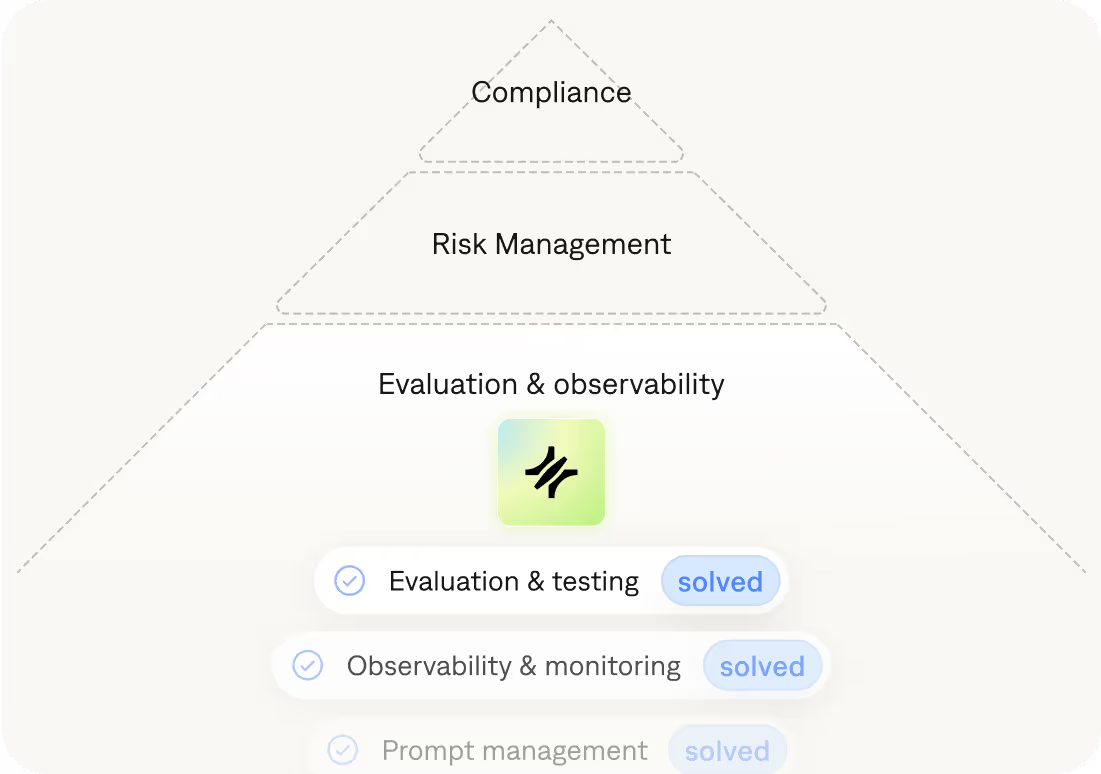

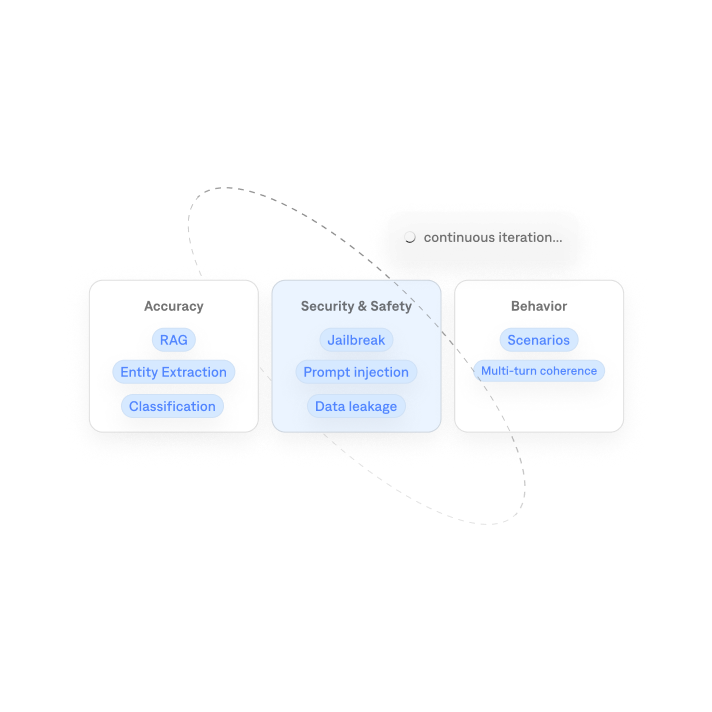

Every prompt change, model swap, or refactor ships with a question mark. There's no baseline to know if v2 is better than v1. Galtea Evaluations runs your AI against Accuracy, Security & Safety and Behavioral metrics every time you iterate, so regressions show up in your pipeline before they reach your users.

Out-of-the-box metrics calibrated against human labels

Custom metrics in under 5 min: code, LLM-as-a-judge, or human eval queues

Automatic metric suggestions based on your product specs, no need to know what to measure before you start
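To make the idea of a baseline concrete, here is a minimal sketch of a regression check between two versions. It is not Galtea's implementation; the metric names, scores, and the `tolerance` parameter are assumptions chosen for illustration.

```python
def find_regressions(baseline, candidate, tolerance=0.02):
    """Return metrics where the candidate scores lower than the baseline
    by more than `tolerance`, mapped to the size of the drop."""
    regressions = {}
    for metric, old_score in baseline.items():
        new_score = candidate.get(metric)
        if new_score is None:
            continue  # metric was not measured for the candidate
        drop = old_score - new_score
        if drop > tolerance:
            regressions[metric] = round(drop, 4)
    return regressions


# Hypothetical per-metric scores for two versions of the same agent.
v1 = {"accuracy": 0.91, "safety": 0.98, "behavior": 0.88}
v2 = {"accuracy": 0.93, "safety": 0.90, "behavior": 0.87}

regressed = find_regressions(v1, v2)
print(regressed)
```

Here v2 improves accuracy but drops on safety by more than the tolerance, so only `safety` is reported as a regression.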

Observability tools show you what broke. Galtea stops it from shipping.

When a real user hits a failure, your monitoring tool tells you after it happened. That's too late. Galtea sits upstream, simulating real user interactions and adversarial inputs before deployment, so problems are found in testing, not in the wild.

MONITORING TOOLS

Runs after deployment

Real users find the bugs

Reactive: alerts after impact

Requires existing traffic

GALTEA

Runs before deployment

Simulated users find the bugs

Proactive: blocks bad deploys

Works from day one, pre-launch

Use both. Galtea for pre-production. Observability tools for production visibility. They solve different problems.

71%

Reduction in operational costs for AI validation processes.

10× ROI

Combining direct savings and regulatory risk mitigation.

+70%

Increase in team efficiency by reducing manual testing tasks.

23.6×

Improvement in vulnerability detection compared to manual processes.

01

Onboard your solution

Effortlessly integrate your AI solution into Galtea’s platform, with a smooth, guided setup.

02

Generate test data

Automatically generate hundreds of high-quality, use-case-specific test cases to simulate diverse, real-world scenarios and stress-test your AI solution’s capabilities.

03

Run evaluation tasks

Conduct automated evaluation tasks that measure system performance, compliance, and robustness, leveraging customisable metrics for accurate results.

04

Analyze results

Access real-time insights and comprehensive reports on your AI solution’s performance, uncovering strengths and areas to optimise.

05

Iterate

Refine and optimise your AI solution through continuous testing and feedback, ensuring enhanced reliability and performance with every iteration.

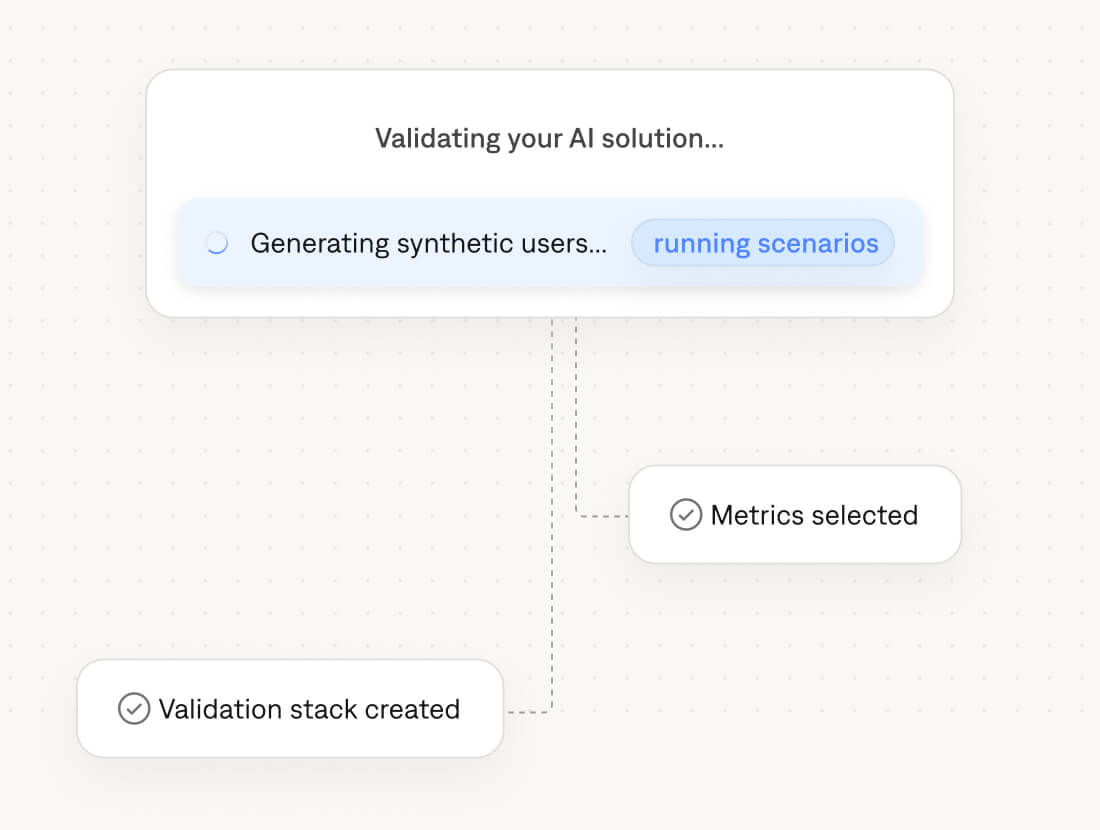

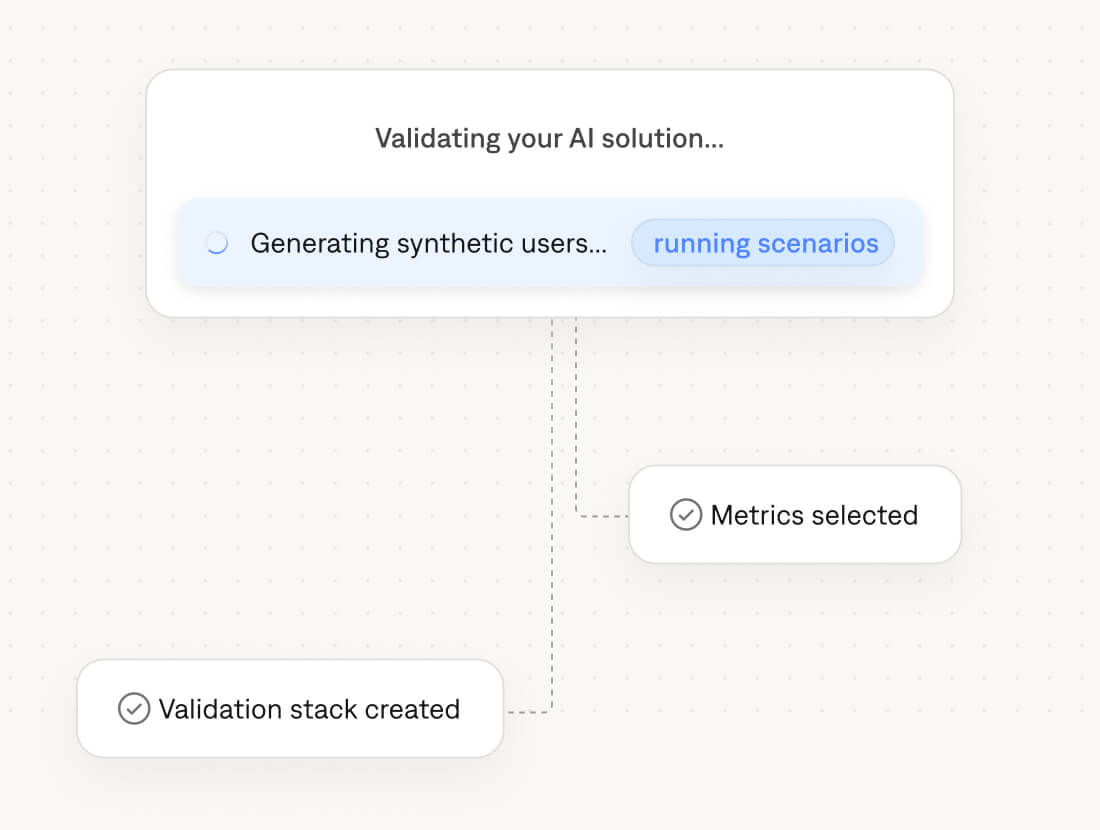

01

Onboard your solution

Effortlessly integrate your AI solution into Galtea’s platform, with a smooth, guided setup.

02

Generate synthetic users

Create large-scale, realistic synthetic user profiles to simulate diverse, real-world scenarios and stress-test your AI solution’s capabilities.

03

Run evaluation tasks

Conduct automated evaluation tasks that measure system performance, compliance, and robustness, leveraging customisable metrics for accurate results.

04

Analyze results

Access real-time insights and comprehensive reports on your AI solution’s performance, uncovering strengths and areas to optimise.

05

Iterate

Refine and optimise your AI solution through continuous testing and feedback, ensuring enhanced reliability and performance with every iteration.

Built on serious infrastructure.

Backed by BSC

Founded by researchers from the Barcelona Supercomputing Center.

MareNostrum 5

R&D that runs on one of Europe's most powerful supercomputers, not off-the-shelf tooling.

Advanced AI research

Works with any AI model. No lock-in, no bias toward any provider.

AI governance & compliance

Evaluation built around emerging regulatory standards.

Backed by serious people

Trusted by the institutions building Europe's AI future

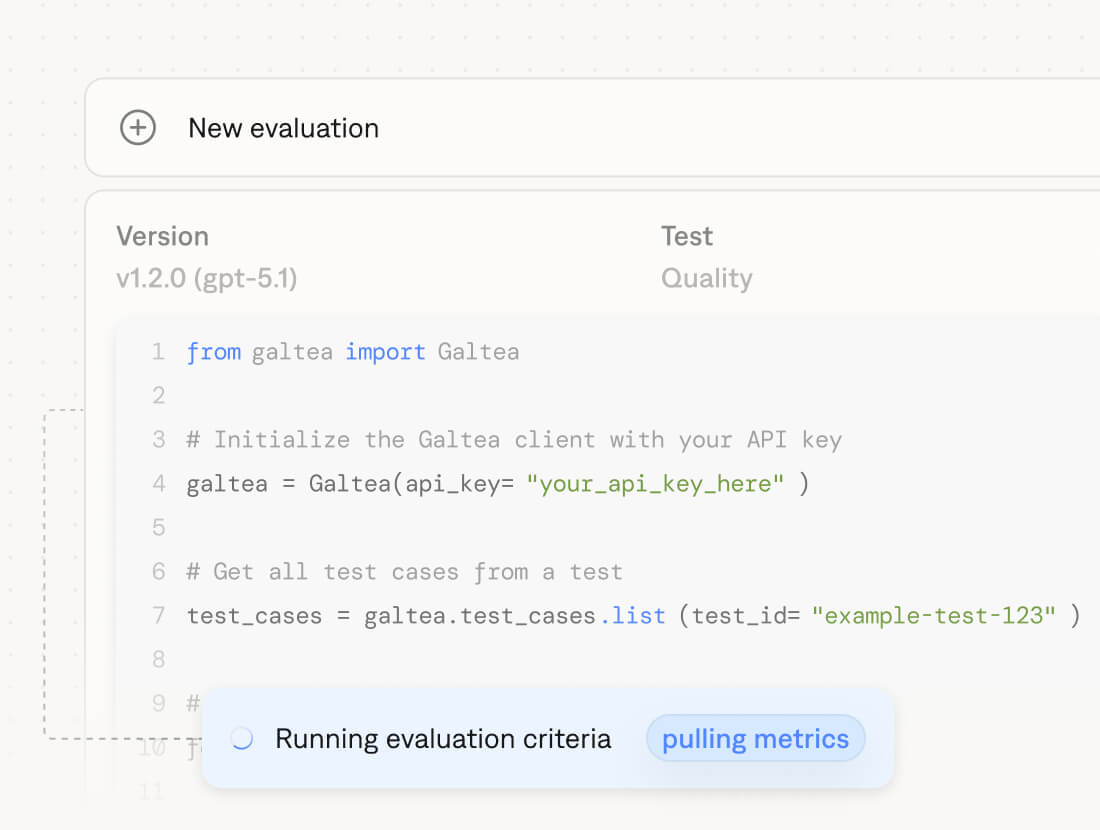

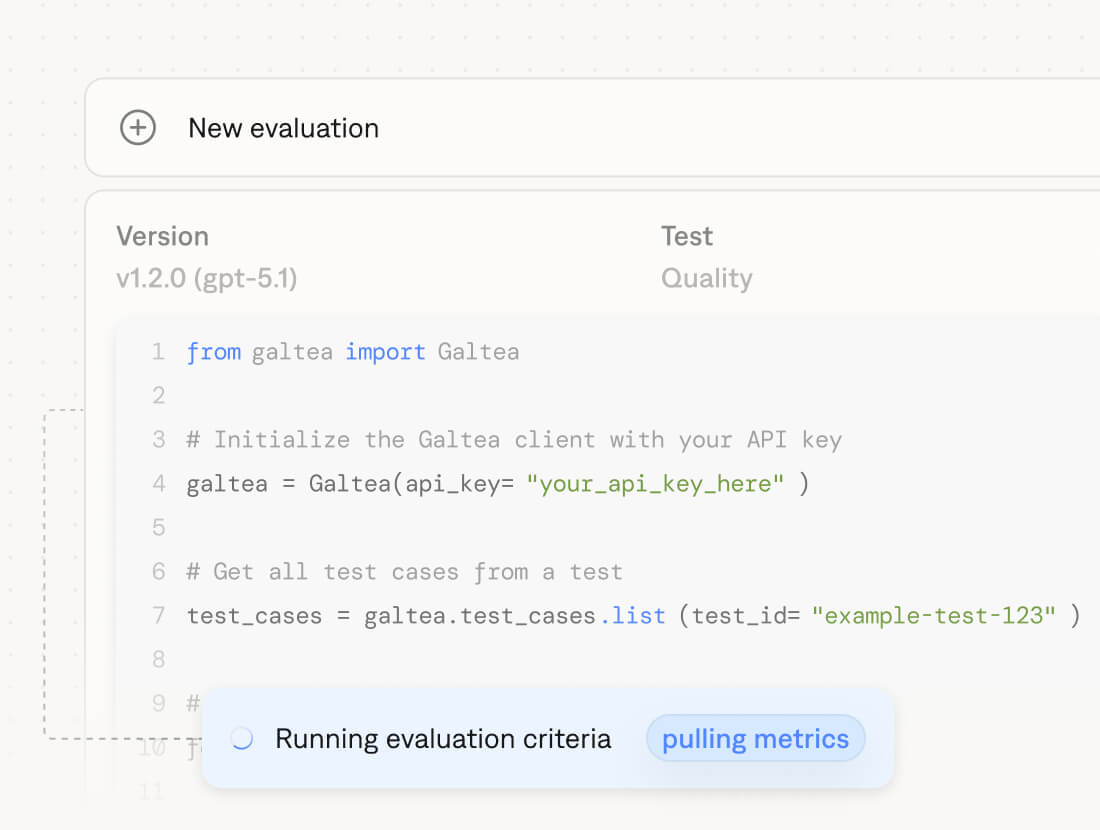

SDK, API, or Platform UI.

One tool for every team.

Integrate via Python SDK or REST API and gate deploys from your terminal. Or open the platform and browse results, compare versions, and share reports without writing a line of code. Same data. Different surfaces.

Python SDK: pip install galtea | CI/CD integration with automated deploy gates

REST API: Language-agnostic, trigger evaluations from any stack

Web Platform: Browse results, compare versions, share audit-ready reports, no code needed
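A deploy gate usually comes down to a process exit code that the CI system interprets as pass or fail. The sketch below shows that pattern in plain Python under stated assumptions: it does not call the Galtea SDK or API, and `gate_exit_code`, the metric names, and the thresholds are hypothetical.

```python
import sys


def gate_exit_code(scores, minimums):
    """Return 0 if every metric meets its minimum score, otherwise 1.

    A metric missing from `scores` is treated as 0.0, so it fails the gate.
    """
    failures = [m for m, floor in minimums.items() if scores.get(m, 0.0) < floor]
    for m in failures:
        print(f"FAIL {m}: {scores.get(m, 0.0):.2f} < {minimums[m]:.2f}", file=sys.stderr)
    return 1 if failures else 0


# Hypothetical evaluation scores and thresholds for one candidate release.
scores = {"accuracy": 0.92, "safety": 0.97}
minimums = {"accuracy": 0.90, "safety": 0.95}
code = gate_exit_code(scores, minimums)
print("gate passed" if code == 0 else "gate failed")

# In a CI job, the script would end with: sys.exit(code)
```

A non-zero exit code fails the pipeline step, which is what blocks the deploy.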

Shipping in a regulated industry?

AI engineering support, infrastructure integration, compliance reporting, and business alignment. For financial services, healthcare, and telco teams that can't afford to get it wrong.

Dedicated AI engineering support throughout deployment

EU AI Act compliance reporting and audit-ready documentation

Infrastructure integration into your existing stack

Business alignment and stakeholder reporting built in