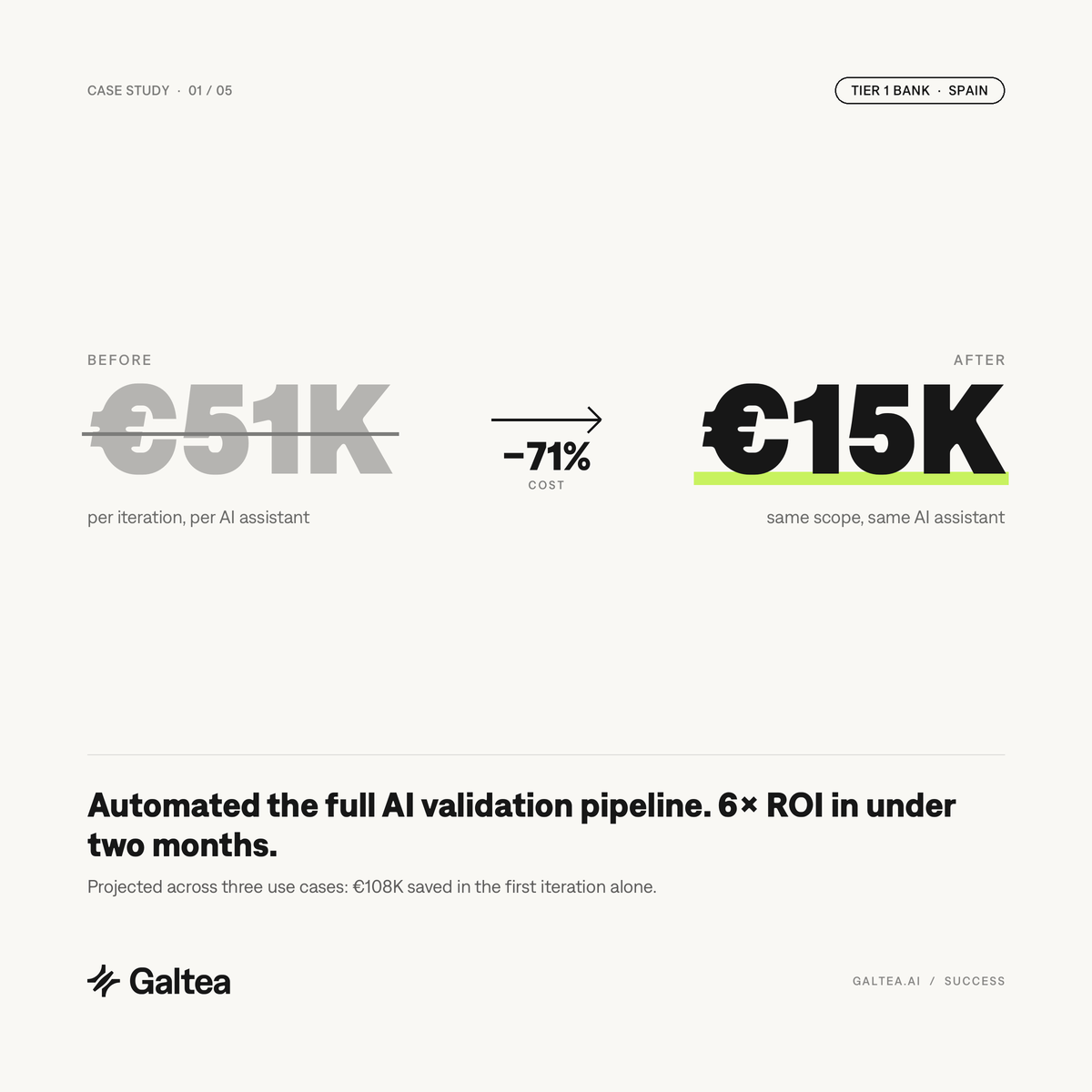

71% lower cost per AI validation cycle — at the same coverage, for a Tier 1 Spanish bank

A Tier 1 Spanish bank replaced manual, sampled validation of its customer-facing AI assistants with a continuous evaluation pipeline. Cost per iteration fell from €51K to €15K for the same scope, and the programme returned 6× ROI in under two months across three use cases.

Manual validation had a ceiling — and the bank was about to hit it three times over

The bank's AI validation process was built the way most banks still build it: manually, sampled, and expensive. Every iteration of a customer-facing assistant cost over €50K to validate. The cost didn't scale down with repetition — it scaled up with every new use case.

QA engineers hand-wrote test cases. Domain experts reviewed sampled conversations. Each review cycle took weeks. By the time an assistant was signed off for production, the underlying model had usually moved on, so the sign-off described yesterday's behaviour rather than the agent actually running in production.

The gap wasn't in tooling discipline — it was in the economics. Banks running AI in regulated consumer products cannot afford to skip validation, but manual validation cannot cover every edge case in a reasonable time window. With two more assistants queued for deployment, the linear cost curve was about to become the bottleneck that decided which use cases shipped and which stayed on the roadmap.

Specification-driven evaluation, run continuously in CI

The bank replaced manual sampling with a continuous evaluation pipeline built on Galtea. Instead of writing tests case by case, the team encoded each assistant's expected behaviour as a specification: capabilities it must cover, inabilities it must refuse, policies it must follow, and boundaries it must respect.

From those specifications, Galtea generated the evaluation datasets and metrics automatically. The team reviewed the generated tests, extended them with a small set of bank-specific edge cases, and wired the pipeline into their CI/CD through the Python SDK. Every deployment candidate is now scored against thousands of auto-generated tests before it ships. Conversations are traced end-to-end with the @trace decorator, so when an evaluation flags a regression, the team can pull the full agent execution trace, not just the final response.

The same pipeline runs LLM-as-a-judge for the qualitative dimensions that matter in a regulated banking context: tone, empathy, policy adherence, and refusal behaviour on out-of-scope finance questions. Judges are calibrated with custom rubrics that encode the bank's own definition of correct behaviour, and judge agreement is reviewed monthly against sampled outputs to keep calibration drift in check.

“Manual validation always had a ceiling. We hit it at the first use case. Automating the pipeline let us past it — by the third use case, the savings had paid for the platform many times over.”

Three design choices that did most of the work

Specifications, not ad-hoc test cases

The bank stopped writing individual tests and started writing behavioural specifications — capabilities ("explain a product in terms a retail customer understands"), inabilities ("do not give personalised financial advice"), policies ("always include risk disclosure on investment products"), and boundaries ("refuse to estimate future market performance"). Tests are generated from the spec, so coverage scales with the spec instead of with QA hours.
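As a rough illustration of what such a specification could look like in code, the sketch below holds the four categories in a plain Python dataclass and flattens them into one entry per behaviour to test. The class name, field names, and assistant name are hypothetical and are not taken from the Galtea SDK; only the four example statements come from the case study.

```python
from dataclasses import dataclass, field


@dataclass
class BehaviourSpec:
    """Hypothetical container for one assistant's expected behaviour."""

    name: str
    capabilities: list[str] = field(default_factory=list)
    inabilities: list[str] = field(default_factory=list)
    policies: list[str] = field(default_factory=list)
    boundaries: list[str] = field(default_factory=list)

    def statements(self) -> list[tuple[str, str]]:
        """Flatten the spec into (category, statement) pairs."""
        pairs = []
        for category in ("capabilities", "inabilities", "policies", "boundaries"):
            for statement in getattr(self, category):
                pairs.append((category, statement))
        return pairs


spec = BehaviourSpec(
    name="retail-investment-assistant",  # hypothetical assistant name
    capabilities=["Explain a product in terms a retail customer understands"],
    inabilities=["Do not give personalised financial advice"],
    policies=["Always include risk disclosure on investment products"],
    boundaries=["Refuse to estimate future market performance"],
)

# Each pair is a behaviour that a test generator would need to cover.
for category, statement in spec.statements():
    print(f"{category}: {statement}")
```

Adding a statement to the spec adds a behaviour to cover, which is the sense in which coverage scales with the spec.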

Evaluations run in CI, not after the fact

Before this change, QA happened after the model was handed off for deployment. Now every candidate build triggers evaluations.run() against the full specification. Failing runs block the release the same way a failing unit test would. The feedback loop shortened from weeks to hours, which is the single biggest contributor to the cost reduction — it removed the hand-off queue, not just the manual labour inside it.
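A release gate of this kind can be sketched in a few lines. The example below does not call the Galtea SDK: it assumes the evaluation step has already produced one aggregate score per metric, and the metric names, thresholds, and scores are illustrative assumptions rather than the bank's real values.

```python
# Hypothetical minimum scores a candidate build must reach to ship.
THRESHOLDS = {
    "policy_adherence": 0.95,
    "refusal_behaviour": 0.98,
    "tone": 0.90,
}


def failing_metrics(scores: dict[str, float], thresholds: dict[str, float]) -> list[str]:
    """Return the metrics that fall below their threshold.

    A metric with no score at all is treated as failing.
    """
    return sorted(
        metric
        for metric, minimum in thresholds.items()
        if scores.get(metric, 0.0) < minimum
    )


def gate(scores: dict[str, float], thresholds: dict[str, float] = THRESHOLDS) -> int:
    """Return a process exit code: 0 lets the release through, 1 blocks it."""
    failures = failing_metrics(scores, thresholds)
    for metric in failures:
        print(f"FAIL {metric}: {scores.get(metric)} < {thresholds[metric]}")
    return 1 if failures else 0


# Illustrative scores for one candidate build (not real data).
candidate_scores = {"policy_adherence": 0.97, "refusal_behaviour": 0.96, "tone": 0.93}
exit_code = gate(candidate_scores)
print("release blocked" if exit_code else "release allowed")
# In a CI job, the script would end with sys.exit(exit_code).
```

Returning a non-zero exit code is what lets a CI runner treat a failed evaluation like any other failed test step.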

Judges calibrated for the bank, not for the internet

Generic LLM-judge prompts don't know what counts as a compliant refusal in a Spanish retail-banking context. The team wrote custom rubrics, grounded in the bank's compliance guidelines, and reviewed judge agreement on sampled outputs each month. That kept judge drift visible and caught regressions that off-the-shelf judges missed, including a set of false-positive approvals that would have shipped under the old manual process.
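The case study does not say which agreement statistic the bank uses. One common option is Cohen's kappa, which corrects raw agreement for agreement expected by chance. The sketch below computes it for a judge and a human reviewer on the same sample, and also lists false-positive approvals (judge says pass, human says fail). The labels are made up for illustration.

```python
from collections import Counter


def cohen_kappa(judge: list[str], human: list[str]) -> float:
    """Chance-corrected agreement between two label sequences."""
    if len(judge) != len(human) or not judge:
        raise ValueError("label lists must be non-empty and the same length")
    n = len(judge)
    observed = sum(j == h for j, h in zip(judge, human)) / n
    judge_counts = Counter(judge)
    human_counts = Counter(human)
    # Probability that both raters pick the same label by chance.
    expected = sum(judge_counts[label] * human_counts[label] for label in judge_counts) / (n * n)
    if expected == 1.0:
        return 1.0  # both raters used a single identical label throughout
    return (observed - expected) / (1 - expected)


def false_positive_approvals(judge: list[str], human: list[str]) -> list[int]:
    """Indices where the judge approved an output the human rejected."""
    return [i for i, (j, h) in enumerate(zip(judge, human)) if j == "pass" and h == "fail"]


# Illustrative monthly sample (not real data).
judge_labels = ["pass", "pass", "fail", "pass", "fail", "pass"]
human_labels = ["pass", "fail", "fail", "pass", "fail", "pass"]

print(f"kappa = {cohen_kappa(judge_labels, human_labels):.3f}")  # kappa = 0.667
print("false-positive approvals at:", false_positive_approvals(judge_labels, human_labels))
```

Tracking the statistic month over month is what makes drift visible: a falling kappa on fresh samples suggests the rubric or the judge model needs recalibration.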

Manual validation is a first-use-case strategy

Most AI-validation programmes inside banks are sized for one model, one assistant, one use case. The economics collapse the moment a second or third use case enters the pipeline, which is where most teams are likely to be within the next 18 months.

Automating the pipeline is not a nice-to-have once a team is past the first deployment. It is the difference between a linear cost curve and a flat one, and it is the difference between shipping the third assistant and parking it on the roadmap. The €36K saved per iteration at this bank is not the headline. The headline is that the next two use cases landed without renegotiating the QA budget.
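For readers who want to check the headline figures, the per-iteration numbers quoted in this case study can be reproduced with a few lines of arithmetic. The variable names are illustrative. The 6× ROI figure is not recomputed here, because the platform cost it depends on is not stated.

```python
# Per-iteration validation cost, as quoted in the case study (EUR).
manual_cost_eur = 51_000
automated_cost_eur = 15_000

saving_eur = manual_cost_eur - automated_cost_eur
reduction = saving_eur / manual_cost_eur

print(f"Saved per iteration: EUR {saving_eur:,}")  # Saved per iteration: EUR 36,000
print(f"Cost reduction: {reduction:.0%}")  # Cost reduction: 71%
```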

See what the same pipeline could do for your AI programme

Our team will walk you through the specification → tests → evaluation → analysis loop, mapped to the use cases you are already running.

Talk to the team →